算法模型应成为人工智能(AI)侵权审查的核心——以扩散(Diffusion)模型和算法为例

Algorithm Models Should Be the Core of Artificial Intelligence (AI) Infringement Review—Using Diffusion Models and Algorithms as Examples

一 引言

人工智能(AI)技术的快速发展和广泛应用,正在深刻改变人类的生产方式和生活方式。在文化创意领域,AI技术也被广泛应用于音乐、绘画、文学等创作领域。例如,AI生成的音乐、绘画和文学作品已经被公开展示,甚至出现在拍卖会上。然而,AI技术也带来了知识产权保护方面的挑战。与传统文化创意领域不同,AI技术生成的作品背后存在着复杂的技术算法,从AI技术的训练到最终作品生成,都离不开各种算法的运用,而如何判断基于这些算法的AI生成作品(AIGC)是否会涉及知识产权的侵犯,已经成为一个亟待解决的问题。

本文将从机器学习算法模型的角度入手,探讨如何审查一项AI技术生成的内容是否侵权,并以使用扩散(Diffusion)模型和算法的AI生成图像软件Stable Diffusion(稳定扩散)为例,探讨“判断AI作品侵权问题应以机器学习算法模型为核心”的重要性,为判断AI作品是否侵犯知识产权的工作提供一定的思路和参考。此外,本文只会探讨算法和模型的基本实现原理,不会研究其具体实现方法和数学公式等内容。

二 AI生成图像的历史介绍

自从20世纪50年代开始,计算机图形学就一直是人工智能领域的研究重点之一。随着计算机硬件的不断升级和深度学习算法的快速发展,人们开始探索如何使用人工智能技术生成图像。在20世纪90年代初,人们开始使用基于规则的方法生成简单的几何图形,这些方法包括分形图像和L-系统。但是,这些方法只能生成基本的几何形状,而不能生成复杂的图像。直到21世纪初,人们开始尝试使用基于机器学习的方法生成图像,“AI生成图像”这一概念开始进入大众视野。

21世纪以来重要的AI生成图像的历史大致可以分为以下几个阶段:

1. 深度学习带来的曙光

2006年,Geoffrey Hinton与Ruslan Salakhutdinov在《Science》上发表论文,展示了通过逐层预训练可以有效训练深度神经网络,这通常被视为“深度学习”(Deep Learning)这一概念重新进入人们视野的起点。2012年,Hinton和他的学生Alex Krizhevsky、Ilya Sutskever又利用计算机显卡(GPU)的并行计算能力训练出AlexNet:将神经网络的计算任务分配到多个处理单元上并行处理,从而大幅加速神经网络的训练和推断过程。这些工作为深度学习的发展奠定了基础,同时也将AI生成图像的算力成本和时间成本降低到“可令人接受”的水平。

Geoffrey Hinton和他的两个刚毕业的学生:Alex Krizhevsky 和 Ilya Sutskever

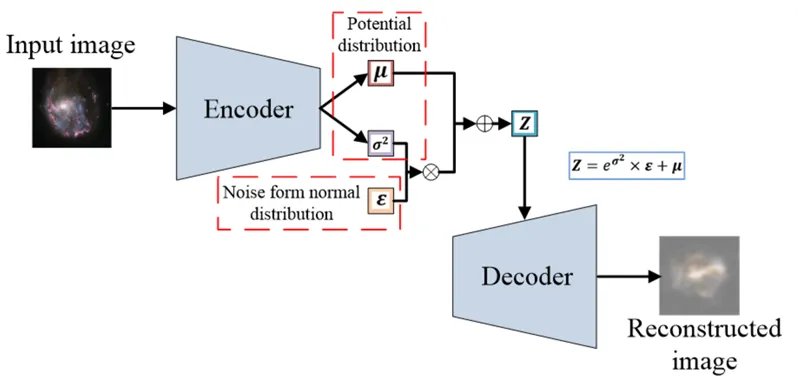

2. 自编码器时代

2010年代中期,随着深度神经网络的发展,出现了一些基于自编码器(AE)和变分自编码器(VAE)的文本生成图像模型,它们通常被用于无监督学习的场景,即在没有明确的图像标签或标注的情况下,从大量的图像数据中学习图像的特征和表示。自编码器是一种神经网络模型,由编码器和解码器两部分组成。编码器将输入数据压缩成一个低维向量,解码器将该向量还原成原始数据。在文本生成图像的任务中,输入数据可以是一个文本描述,编码器将其压缩成一个向量,解码器将该向量还原成一张图像。变分自编码器是一种基于自编码器的改进模型,它不仅可以压缩输入数据,还可以生成新的数据。VAE在编码器中引入了一个隐变量,用于表示输入数据的潜在特征,例如颜色、纹理、形状等,解码器通过隐变量生成新的数据。在文本生成图像的任务中,隐变量可以表示图像的风格或特征,解码器可以根据不同的隐变量生成不同的图像。

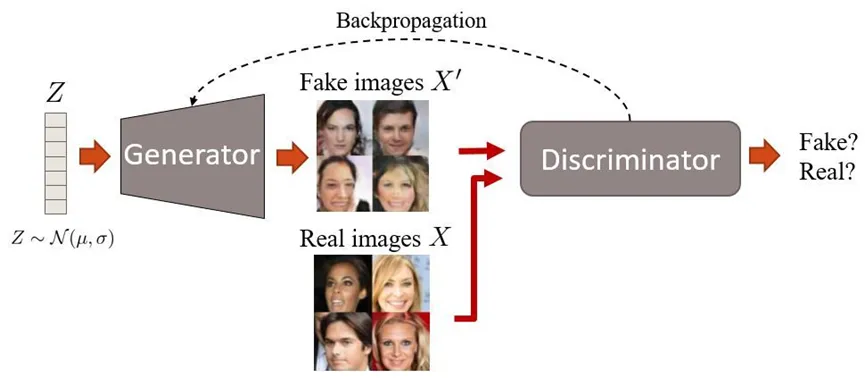

3. GAN——左右互搏的好手

2014年,Goodfellow等人提出了对抗生成网络(GAN),开启了一个新的时代。GAN由一个生成器和一个判别器组成,生成器接收一个随机噪声向量作为输入,并生成一张图像,判别器接收一张图像(可能是真实图像也可能是生成器生成的“假”图像)作为输入,并输出一个值,表示这张图像是真实的还是生成的。生成器和判别器通过对抗训练来提高自己的性能,生成器的目标是生成尽可能真实的图像,而判别器的目标是尽可能准确地区分真实图像和生成图像。在训练过程中,生成器和判别器相互竞争,不断调整自己的参数,直到生成器能够生成足够真实的图像,以至于判别器无法区分真实图像和生成图像。训练完成后,生成器可以接收任意文本作为输入,并生成与文本相关的图像。通过对抗生成网络的训练,生成器可以学习到如何从文本中提取关键信息,并将其转化为图像。这种方法可以用于生成各种类型的图像,例如风景、人物、动物等。通过对抗训练,GAN可以产生高质量和多样性的图像。2016年以后,出现了一系列基于GAN的文本生成图像模型,如Stack-GAN、Attn-GAN、Big-GAN等。这些模型通过引入注意力机制、层次结构、条件信息等技术,提高了文本到图像的对齐度和精细度。

对抗生成网络(GAN)的原理简单示例

4. DALL-E带来的超大数据模型时代

2021年初,OpenAI发布了以一个120亿参数版本的GPT-3作为核心算法的AI模型:DALL-E。DALL-E使用了一个离散变分自编码器(discrete variational autoencoder,dVAE),将图像离散化为标记,再用Transformer对文本标记和图像标记进行联合建模,并利用自回归(autoregressive)的损失函数进行训练。简单来说,DALL-E的训练数据集包含了各种各样的图像和对应的描述文本,例如“一只黄色的猫坐在草地上”、“一只红色的火鸟在天空中飞翔”等等。模型通过学习这些图像和文本之间的关系,从而能够生成与给定文本描述相符的图像。在生成图像时,DALL-E首先将文本描述转化为一串文本标记,然后由Transformer逐个预测后续的图像标记,最后由自编码器的解码器将这些图像标记还原为一张图像。

5. 扩散模型引领AI生成图像的热潮

2021年下半年开始,在OpenAI基金会发布了采用了扩散模型(Diffusion Model)的文本生成图像模型GLIDE之后,引发了AI生成图像领域的新热潮。随后,Midjourney平台通过其官方Discord机器人推出了文生图的在线服务(目前版本V5.1),Stable Diffusion软件提供了一个基于扩散模型的文本生成图像工具箱,OpenAI正式开放了DALL-E 2.0的文本生图功能……自此AI生图软件成了三足鼎立之势。尤其当Stability AI公司正式在网上开源Stable Diffusion的程序和模型后,任何人都可以利用自己电脑或网络上的服务器搭建绘画应用,生成任何自己希望生成的图像。

国内网上讨论AI绘画热度也基于此而来,考虑到目前乃至未来一段时间,基于扩散算法的Stable Diffusion将会是主流的民间AI生成图像算法,因此本文将会以该软件及其算法为例,探讨相关的法律问题。

三 扩散模型生成图像的原理

1. 模型与算法的关系

在说明扩散模型的原理之前,我想先介绍机器学习概念中“模型”与“算法”的关系。

在机器学习中,算法和模型是两个重要的概念。算法是指在数据上运行的一种过程,用于创建一个机器学习模型。机器学习算法可以从数据中“学习”,或者在数据集上“拟合”模型。在机器学习中,有许多不同的算法,如分类算法、回归算法和聚类算法等。

一旦机器学习算法完成训练,它会生成一个机器学习模型,该模型代表了算法从数据中学到的内容,包括规则、数字和其他算法特定的数据结构,用于进行预测。机器学习模型可以看作是一个“程序”,它包含数据和使用这些数据进行预测的过程。对于训练数据,我们通过运行机器学习算法生成模型,并将其保存,以便将来用于预测新的数据。

简单来说,算法是用于生成模型的过程,而模型则是算法的输出结果。在使用机器学习进行任务时,我们通常会选择一个合适的算法来生成一个能够在新数据上进行预测的模型。通过不断地运用机器学习算法,我们可以不断改进和优化生成的模型,以便更好地解决各种现实问题。[1]

在机器学习中,算法生成模型的过程可以概括为以下几个步骤:

- 数据收集: 收集用于训练模型的数据集。在生成图像范畴,数据集则是根据模型需求而收集而来的各种各样的图像。
- 数据预处理: 对数据进行清洗、转换、标准化、标记等操作,以便算法能够更好地理解和处理数据。
- 选择算法: 选择适合当前任务的机器学习算法。通常需要考虑算法的准确性、速度、复杂度和可解释性等方面。
- 训练模型: 使用选定的算法在数据集上训练模型。算法根据数据的特征和标签进行学习,并生成模型参数,如权重和偏差等。
- 模型评估: 使用评估指标对模型进行评估,以确定其在新数据上的表现。
- 模型部署: 使用训练好的模型对新数据进行预测或分类。

整个训练流程可以表示成以下的图表:

在这个流程图中,算法用于处理数据,并生成一个模型,同时又用于检测模型是否符合输出结果的预期。算法是模型的核心,模型是算法的结果。

2. 扩散算法的原理

扩散算法是一种生成模型,可以用来生成图像、文本、音频等数据。它的基本思想是将真实数据加入噪声,使其逐渐变得随机,然后再用一个去噪网络逆向重构出原始数据。

如同其他AIGC算法一样,扩散算法同样具有训练和采样(生成)的过程,无论训练或采样,均基于扩散过程(Diffusion Process)和去噪网络(Denoising Network)。扩散过程是将真实数据加入不断增大的高斯噪声,使其逐步变成随机噪声。去噪网络是一个可以根据当前的噪声水平,从已经加入噪声的数据中恢复出原始数据或更清晰的数据的神经网络。

训练时,需要给真实数据加入不同水平的噪声,然后用去噪网络来预测原始数据或下一步的数据,并用损失函数来衡量预测和真实值之间的差异。训练目标是使得去噪网络能够在任何噪声水平下都能尽可能地恢复出原始数据。

通俗来说,就是将一张图像逐步加入随机的噪点,直到把图像完全变成一张噪点图,然后再把这张噪点图通过随机增加像素点的方式,还原成一张普通的图像。去噪网络在其中会记录噪点的随机值,并通过“调整预测”的方式去判断如何“增加像素”才能更符合原本训练素材的“样式”。

当有了足够的训练,这个包含着“预测算法”的模型就正式宣告完成了。如果能“拆开”这个模型,就可以发现这个模型包含了“如何把一张噪声图变成一张普通图像”的大量“预测路径”,程序可以按着这些“路径”,生成各种各样符合的图像。

到了生成图像时,就需要从随机噪声开始,然后用去噪网络逐步减小噪声水平并预测下一步的减少方向(通过之前“路径”),并生成更清晰的数据。最终,当噪声水平为零时,我们就得到了生成的数据。

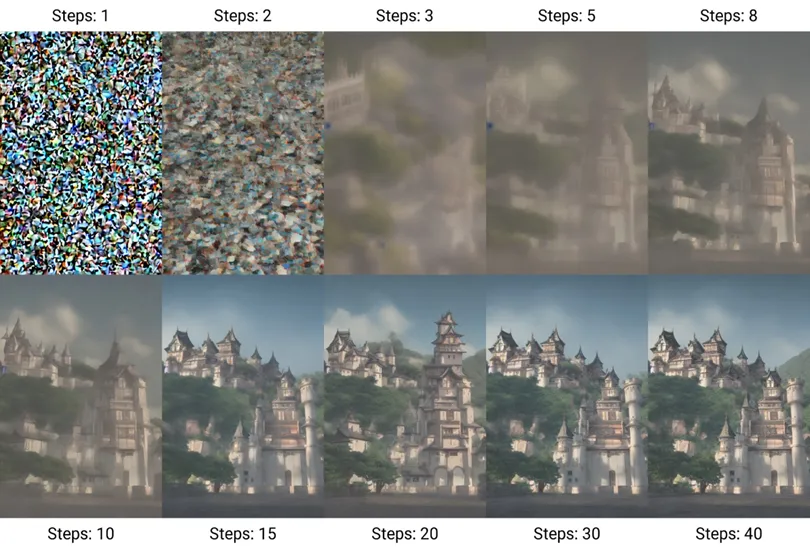

生成过程的示例,训练过程可以看作是生成的反向过程

3. 利用关键词(Prompt)生成特定AI图像

在原始的扩散模型中,就算训练好的模型,只能漫无目的地生成符合训练集规律的图像。如果训练集中充满了不同类型的图像,那最终生成的结果将会“什么都像”,但实际并不能让人看懂。

为了确保输出结果符合我们的预期,AI程序需要引入一个分类、判断的系统,来决定图像的最终生成方向。

以Stable Diffusion V2版本为例。

Stable Diffusion引入了一个叫OpenCLIP的文字模型作为文本编码器,这个模型拥有约3.5亿个参数(“参数”指模型在训练中学到的权重数值,而非训练数据本身),其训练数据中的每一条内容都由一张图像以及这张图像的描述而组成(实际上这些训练内容均是从网上“爬虫”图片以及其描述文本得来的,但本文不讨论训练集来源的合法性问题)。

CLIP的训练过程可以简化为“判断文字描述是否符合图像”的过程,在对图像和文字各自通过图像编码器和文字编码器进行编码后,随机抽出结果然后不断判断两者是否相似,经过大量训练后,得出“哪种描述符合哪种图像”的模型结果。

当引入了文本模型后,在训练图像模型时则需要对图像进行标识,例如给一张图片标记“一只狗,草坪,飞盘”或者直接“一只狗在草坪上玩飞盘”(这个标记过程往往也会由AI完成),模型就会“学会”这样的图像具有“狗”“草坪”“飞盘”这三个元素,再通过其他图片训练时提供的标记,判断出具体什么元素是“狗”“草坪”“飞盘”。

当这种图像数量极为庞大时,AI就会“学会”生成这些元素的“路径”(预测噪声)的共性是什么,在生成时则可以通过我们输入的需求(“关键词”),寻找最符合的去噪方式,在每一步去噪的过程中,都会判断生成的内容是否符合关键词匹配的编码信息,最终直到把噪声图去噪(生成)为符合关键词内容的图像。

接下来通过一个示例展示Stable Diffusion的生成过程:

生成关键词:Golden Retriever,grass,(8k, RAW photo, best quality, masterpiece:1.2), (realistic, photo-realistic:1.37),cinematic lighting,best quality, ultra high res, (photorealistic:1.4),ultra-detailed, extremely detailed, CG unity 8k wallpaper,best illustration,high resolution, film grain, Fujifilm XT3

这是一组展示了如何通过20个采样迭代步数(Steps)生成图片的示例,生成关键词由金毛犬(Golden Retriever)、草(grass)以及一些用于指定真实画风的词语所组成,图中Steps后面的数字代表了下方图片是第几次迭代的结果。

第1次和第3次迭代时,可以看到只有一团橙色和一圈绿色的色块,说明AI首先认为“金毛犬”和“草”两种元素各自在训练集中的最大共性是橙色和绿色;到了第5次迭代,随着图像不断迭代(去噪),一只狗的轮廓已经可以被我们看到,但还有一些奇怪的色块在上面,草地也不够清晰,但已经可以分清草地和远处天空的区别;等到了第10次迭代已经可以明显看出一只金毛犬在草地的样子了,之后的12次到20次迭代,是AI不断在细化、调整画面,让图像中的金毛犬和草地更符合训练集里“金毛犬”和“草地”的特征。

从迭代图例中,我们可以清楚地看到Stable Diffusion是如何从一团模糊不堪的色团,慢慢生成一张符合关键词内容的图像。虽然最终成品还有一点俗称的“油腻感”,可以明显看出和真实的照片有一点区别,但恰恰是这种“油腻感”,证明了AI并不是从数据库中直接拼图,而是有着自己独特的生成图像方式。

四 从扩散模型的原理判断生成结果是否具备侵权性质

1. 扩散模型的训练和生成均是对“共性”的反映

从上述训练和生成的原理可见,扩散模型在训练和生成时,并不是单纯模仿数据集内某一图像的,如线条、构图、色彩等某个具体内容,更不是所谓的“从数据库中获取图像进行拼接”,而是通过分析某个元素在这些图像中的共性,从噪点图通过逐步去噪、调整,来生成出符合这种共性的图像结果。

AI并不会知道“苹果”的意思是什么,更不是在数据库中挑一个“苹果”稍加修改后展示出来,它只知道在噪声图中如何分配像素点,才能更符合数据集中标记成“苹果”的内容中像素点在空间中的分布。

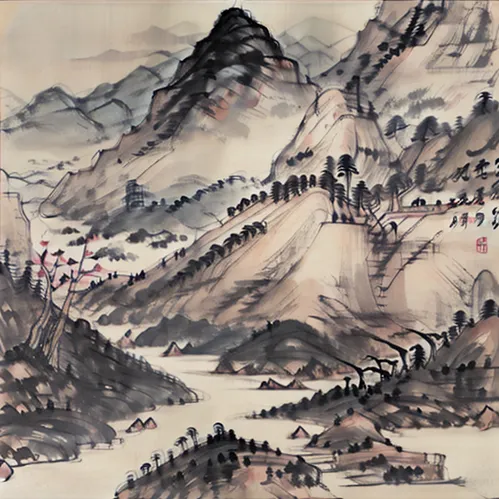

在一堆苹果图片中,它学到的是形状、色彩等“苹果”的共性;在一堆油画图中,它学到的是“油画”的质感、笔触等“油画”的共性;在某个画家的作品集中,它学到的是这个画家的色彩选择、构图等“画家画风”的共性。

附加水墨风格训练模型后,甚至能生成国画风格的图像

2. 一般情况下,基于“共性”创作的作品并不应视为对训练集作品的著作权的侵犯

绝大部分人类学习绘画的过程均是从临摹开始,在掌握了光影、线条、构图、结构、比例等“美”和“自然”的共性后,通过不断练习和调整,再逐渐创作出带有自己风格的作品,而Stable Diffusion为代表的扩散算法模型AI程序的训练和生成,恰恰复制了这个过程,而区别在于,人类学习的对象是通过眼睛观察到的自然规律以及他人作品,AI学习的对象是训练者提供的图像内容。

《中华人民共和国著作权法》中认为著作权人对作品拥有包括修改权、保护作品完整权、复制权、发行权等权利,但回顾扩散算法模型的训练过程,并没有对数据集作品进行任何形式的修改或破坏(学习过程中增加噪点的过程明显不算法律意义上的破坏),基于“共性”的生成过程也没有对原数据集中的作品进行任何复制、发行、展览。此外,目前法律并没有允许某个人对某种“画风”享有独占的权利,因此就算使用某作者的作品集进行对“画风”的训练,最终的作品也仅是在图像中结合这种“画风”中的美术共性,并非针对某个作品的抄袭。

3. 但“过拟合”可能会导致与训练作品过于相似,因此还应通过模型的具体情况进行判断

“欠拟合”和“过拟合”都是深度学习和机器学习模型中的错误训练结果,前者是因数据集太多且训练不足而导致无法生成具有“共性”的结果,后者则是因为数据集太少太专一而导致生成的结果与训练集内容过于相似。

正如人也无法想象没有见过的东西,假设一个训练集中只有5张不同标识的作品,最终经过多轮训练出来的模型出现“过拟合”,对于某个“元素”的理解均只有那5张作品,从而导致生成的作品将可能是那5张作品中某张的重现。

在这种情况下,就算生成的过程仍然是通过寻找“共性”去噪,但因“共性”完全来源于某个作品,将导致生成的内容会与该作品过于相似或完全一致,此时再以模型算法的原理,表示该生成作品不侵犯训练集作品的著作权,明显缺乏说服力。

五 AI作品使用的算法模型应成为侵权审查的核心

通过上述分析,我们可以看出,不同的AI生成作品算法模型具有不同的工作原理。特别是当前流行的扩散模型,在正常情况下其生成的作品通常不会侵犯训练集中某个特定作品的权利。但如出现“过拟合”现象,或使用特定内容LoRA模型(Low-Rank Adaptation of Large Language Models,大语言模型的低秩适应)、低重绘度“图生图”方式等,导致与训练集原图或特定内容过于相似的情况,也需要单独讨论。

1. 仅因基于AI生成即认为作品侵权,忽视算法模型的实际原理,容易造成过度保护

AI生成作品与人工创作作品,在创作过程和原理上存在一定差异,但也有其共性。大多数AI生成作品算法模型的原理为,通过分析大量信息和数据,学习获得某个领域的“经验”或“共性”,并在此基础上进行新的作品创作,而非简单地复制、移植或黏合训练集中的某个具体作品。如果忽视这一技术原理,仅因某作品由AI生成就认定其侵权,不仅在本质上误解了AI的创作方式,也将不当地限制AI技术在文化创意领域的发展与应用。

以本文的范例来说,基于扩散算法生成的美术作品,是由机器通过学习大量同类型的美术作品中的“共性”与“规律”后,按使用者的需求,把不同内容的“共性”与“规律”依照最符合现实逻辑的方式进行组合,从而创作出新的作品。该过程并不直接取用某个具体的作品,本质与人类在学习“油画”的画技后绘制出新的“油画”作品,学习“水墨画”的画技后绘制出新的“水墨画”作品并无二致。

如果仅因作品由AI生成就将之定义为对训练集内作品构成侵权,等同于将某领域的所有的“共性”与“规律”都认定为归属于某人或某些群体所拥有,这无疑已经超出了《著作权法》的保护范畴,属于明显的过度保护。

在AI软件平民化的现在,任何人都可以通过AI软件生成自己想要的内容,而不需要长时间的学习来掌握某个专业技能,这将有助于普遍激发创意,使得“创作”的核心来到了“创”,避免因能力不足而无法“作”。但如认定某些“共性”和“规律”只能掌握在部分具有“作”能力的群体手中,认定基于学习这些内容的AI软件均为侵权,不仅不利于社会创意的发展,还将直接抹杀AI技术的发展,毕竟AI与人类一样,无法想象(创作)未曾见过(训练过)的内容。

但除类似扩散性模型的算法外,也不排除存在真实组合训练集作品的算法。因此为避免过度保护,在判断AI作品是否侵权时,应重点考虑算法模型的实际工作原理,而非简单地因其AI生成的属性而作出定论。

2. 一概认定AI作品不侵权,忽略具体算法模型的使用情况,则可能保护不足

不同的AI生成作品算法模型在使用数据与信息的方式上存在差异,一些模型的工作原理是在训练数据集的基础上生成新的作品,如果训练数据包含某原有作品,而算法模型又无法有效避免过度使用该原有作品,生成的AI作品很有可能构成侵权。如果忽略算法模型的具体使用情况,一概认定AI生成的作品不会侵权,在此情况下原有作品权利人的权利可能无法得到合理保护。

例如,若某文本生成模型的训练语料中仅包含少量小说,甚至只包含某作者的小说作品时,当模型的算法无法避免直接移植或大量借鉴该小说的人物、情节与语言时,其生成的AI作品很可能侵犯原小说权利人的专有权利。此时,如果简单地因其为AI作品而认定其不侵权,实际是忽视了算法模型过度依赖和使用原有作品的事实,导致对原有作品权利的保护不足。

同理,如基于扩散性模型的Stable Diffusion,也支持载入LoRA模型来使得生成作品更偏向某种结果;许多使用者在制作LoRA模型时,可能会选择基于某个特定真实人物或虚拟角色进行训练,使得最终AI作品生成的作品包含了该人物或角色,导致该AI画作也存在侵犯他人肖像权或某角色著作权的可能性。如果因其基于扩散性算法生成而一概否认此类作品的侵权可能,则无疑忽视了算法模型使用数据的具体方式与使用者的侵权恶意,无法对权利人提供应有保护。

为确保权利人权利得到应有保护,在判断AI作品侵权与否时,应切实考量其生成所基于的算法模型在训练与使用数据时的具体方式,对可能导致保护不足的情形给予应有重视。

3. 以算法模型为核心,确认AI技术的法律地位,有助于AI技术与法律良性互动

算法模型作为AI生成作品的技术基础,其法律地位目前尚不明确,目前仅有《互联网信息服务算法推荐管理规定》对“算法推荐”这一用途进行规范,而拟定中的《生成式人工智能服务管理办法(征求意见稿)》因与生成式人工智能的现实情况存在巨大差异而被业界讨论甚多。若算法模型能获得符合实际的法律规范,如认定训练集的是否属于合理使用、生成作品的著作权归属、算法制作者与使用者的具体权利义务等,将有助于AI模型开发者和使用者了解相关法律风险,激励更多开发者与投资者投入创新性算法模型的研发,将推动AI技术在更广泛的领域内发展应用,使其在各类产业中的作用进一步彰显。

同时,就目前网上关于人工智能技术的探讨文章可见,当前法学界存在对人工智能等新兴技术的理解还不足够深入的情况,这可能导致一些法律分析、法律观点在未充分理解新技术原理与特点的情况下就下定论,其结论可能过于主观并忽略客观因素。相比过往技术,人工智能等新兴技术在结合了算法模型等内容后,往往更加复杂与难以理解,这使得相关法律分析面临较大挑战。以人工智能创作的作品为例,如果不能理解不同算法模型的工作方式与原理,将很难在涉及其侵权判断与保护等方面作出恰当的分析与结论。再者,如果法学界不能及时跟上新技术发展的步伐,充分理解其内在原理和特性,很容易在制定法规、进行司法判断或风险分析时因难以精准把握新技术实质而产生偏差,可能会形成法律过于领先于技术发展的状况,阻碍新技术的应用与推广。

如法学界对算法模型的原理进行充分的学习与研究,明确以算法模型为核心的侵权判断方法,将有助于法学界对人工智能技术作出更严谨的判断,研制更符合实际的法律法规,将可以降低人工智能技术开发者和使用者的风险,激励更多创新性算法模型的设计与开发,推动AI技术在更广泛领域的应用,特别是在文化创意产业中的进一步发展。这不仅有利于AI产业自身的蓬勃发展,也将丰富人类的精神生活和推动社会进步。

[1]《Difference Between Algorithm and Model in Machine Learning》https://machinelearningmastery.com/difference-between-algorithm-and-model-in-machine-learning/

1. Introduction

The rapid development and widespread application of Artificial Intelligence (AI) technology is profoundly changing human production methods and lifestyles. In the cultural and creative field, AI technology is also widely applied in music, painting, literature, and other creation fields. For example, AI-generated music, paintings, and literary works have been publicly displayed and even appeared at auctions. However, AI technology has also brought challenges in intellectual property protection. Unlike traditional cultural and creative fields, AI technology-generated works have complex technical algorithms behind them—from AI technology training to final work generation, various algorithms are used, and how to determine whether AI-generated content (AIGC) based on these algorithms will involve intellectual property infringement has become an urgent problem to solve.

Taking the perspective of machine learning algorithms and models, this article explores how to review whether AI-generated content infringes. It uses Stable Diffusion, an AI image generation program built on diffusion models and algorithms, as an example to argue that infringement analysis of AI works should center on the machine learning algorithm and model, and it offers some ideas and references for determining whether AI works infringe intellectual property. This article covers only the basic principles of the algorithms and models, not their specific implementations or mathematical formulas.

2. Introduction to AI Image Generation History

Since the 1950s, computer graphics has been one of the research focuses in the artificial intelligence field. With the continuous upgrading of computer hardware and the rapid development of deep learning algorithms, people began exploring how to use artificial intelligence technology to generate images. In the early 1990s, people began using rule-based methods to generate simple geometric figures—these methods included fractal images and L-systems. However, these methods could only generate basic geometric shapes and could not generate complex images. It was not until the early 21st century that people began trying to use machine learning-based methods to generate images, and the concept of “AI-generated images” entered the public eye.

The important history of AI-generated images since the 21st century can be roughly divided into the following stages:

1. The Dawn Brought by Deep Learning

In 2006, Geoffrey Hinton and Ruslan Salakhutdinov published a paper in Science showing that deep neural networks could be trained effectively with layer-wise pretraining, which is widely regarded as the point where “Deep Learning” re-entered public view. In 2012, Hinton and his students Alex Krizhevsky and Ilya Sutskever used the parallel computing capability of computer graphics cards (GPUs) to train AlexNet, distributing neural network computation across many processing units and thereby greatly accelerating training and inference. This work laid the foundation for the development of deep learning and reduced the compute and time costs of AI image generation to an “acceptable” level.

Geoffrey Hinton and his two freshly graduated students—Alex Krizhevsky and Ilya Sutskever

2. The Autoencoder Era

In the mid-2010s, with the development of deep neural networks, some text-to-image generation models based on Autoencoders (AE) and Variational Autoencoders (VAE) emerged. They were usually used for unsupervised learning scenarios—that is, learning image features and representations from large amounts of image data without explicit image labels or annotations. An autoencoder is a neural network model composed of an encoder and decoder. The encoder compresses input data into a low-dimensional vector, and the decoder restores the vector to original data. In text-to-image generation tasks, input data can be a text description—the encoder compresses it into a vector, and the decoder restores the vector into an image. A variational autoencoder is an improved model based on autoencoders—it can not only compress input data but also generate new data. VAE introduces a latent variable in the encoder to represent latent features of input data, such as color, texture, shape, etc.—the decoder generates new data through latent variables. In text-to-image generation tasks, latent variables can represent image styles or features, and the decoder can generate different images based on different latent variables.

3. GAN—The Expert in Adversarial Combat

In 2014, Goodfellow et al. proposed Generative Adversarial Networks (GANs), opening a new era. A GAN consists of a generator and a discriminator. The generator receives a random noise vector as input and generates an image; the discriminator receives an image (either a real image or a “fake” image produced by the generator) and outputs a value indicating whether the image is real or generated. The two are trained adversarially: the generator tries to produce images that are as realistic as possible, while the discriminator tries to distinguish real images from generated ones as accurately as possible. During training they compete and continuously adjust their parameters until the generator produces images realistic enough that the discriminator can no longer tell them apart from real ones. In text-conditional variants, the generator also receives an encoding of a text description alongside the noise vector, so that after training it can generate images related to the input text, such as landscapes, people, or animals. After 2016, a series of GAN-based text-to-image models appeared, such as StackGAN and AttnGAN, and conditional models such as BigGAN further improved image quality. By introducing attention mechanisms, hierarchical structures, conditional information, and other techniques, these models improved text-to-image alignment and the level of detail.

Simple example of GAN principles

4. The Era of Ultra-Large Data Models Brought by DALL-E

In early 2021, OpenAI released DALL-E, an AI model built on a 12-billion-parameter version of GPT-3. DALL-E uses a discrete variational autoencoder (dVAE) to discretize images into tokens, models the text tokens and image tokens jointly with a Transformer, and is trained with an autoregressive loss. Simply put, DALL-E’s training dataset contains a wide variety of images with corresponding descriptive texts, such as “a yellow cat sitting on grass” or “a red firebird flying in the sky.” By learning the relationship between these images and texts, the model can generate images that match a given description. When generating an image, DALL-E first converts the text description into a sequence of text tokens, then has the Transformer predict the image tokens one at a time, and finally uses the autoencoder’s decoder to turn those image tokens into an image.

5. Diffusion Models Lead the AI Image Generation Boom

In the second half of 2021, OpenAI released GLIDE, a text-to-image model based on a diffusion model, which triggered a new boom in AI image generation. Subsequently, Midjourney launched its online text-to-image service through its official Discord bot (version V5.1 at the time of writing), Stable Diffusion provided a text-to-image toolbox based on diffusion models, and OpenAI opened the text-to-image function of DALL-E 2 to the public. AI image generation software has since settled into a three-way contest. In particular, after Stability AI open-sourced the Stable Diffusion program and models, anyone could build a drawing application on their own computer or on a network server and generate any image they wanted.

The popularity of AI painting in online discussion in China also stems from this. Because Stable Diffusion, which is based on the diffusion algorithm, is likely to remain the mainstream AI image generation tool among ordinary users now and for some time to come, this article uses the software and its algorithm as the example for exploring the related legal issues.

3. Principles of Diffusion Models Generating Images

1. The Relationship Between Models and Algorithms

Before explaining the principles of diffusion models, I want to introduce the relationship between “models” and “algorithms” in machine learning concepts.

In machine learning, algorithms and models are two important concepts. An algorithm is a process that runs on data to create a machine learning model. Machine learning algorithms can “learn” from data or “fit” models on datasets. In machine learning, there are many different algorithms, such as classification algorithms, regression algorithms, and clustering algorithms.

Once a machine learning algorithm completes training, it generates a machine learning model that represents what the algorithm has learned from the data, including rules, numbers, and other algorithm-specific data structures used for prediction. A machine learning model can be viewed as a “program” containing data and processes for using that data to make predictions. For training data, we generate models by running machine learning algorithms and save them for future use in predicting new data.

Simply put, algorithms are processes for generating models, while models are the output results of algorithms. When using machine learning for tasks, we usually select a suitable algorithm to generate a model capable of predicting new data. Through continuous use of machine learning algorithms, we can continuously improve and optimize generated models to better solve various real-world problems. [1]

In machine learning, the process of algorithms generating models can be summarized into the following steps:

- Data Collection: Collect the dataset used to train the model. For image generation, the dataset consists of images of all kinds, collected according to the model’s requirements.
- Data Preprocessing: Clean, transform, standardize, and label the data so that the algorithm can better understand and process it.
- Algorithm Selection: Select a machine learning algorithm suited to the current task. Accuracy, speed, complexity, and interpretability usually need to be considered.
- Model Training: Train the model on the dataset using the selected algorithm. The algorithm learns from the features and labels of the data and produces model parameters such as weights and biases.
- Model Evaluation: Evaluate the model with evaluation metrics to determine how it performs on new data.
- Model Deployment: Use the trained model to make predictions or classifications on new data.
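The six steps above can be sketched with a deliberately tiny task. This is a minimal illustration, not how an image model is trained: the synthetic data, the choice of gradient descent on a straight line, and every function name are assumptions made for the example. The point it tries to show is the distinction drawn earlier: `train` is the algorithm, and the pair of numbers it returns is the model.

```python
import random

def collect_data(n=200, seed=0):
    """Data collection: synthetic (x, y) pairs standing in for a real dataset."""
    rng = random.Random(seed)
    xs = [rng.uniform(0.0, 10.0) for _ in range(n)]
    ys = [3.0 * x + 2.0 + rng.gauss(0.0, 0.1) for x in xs]
    return xs, ys

def fit_scaler(xs):
    """Data preprocessing, part 1: measure mean and standard deviation."""
    mean = sum(xs) / len(xs)
    std = (sum((x - mean) ** 2 for x in xs) / len(xs)) ** 0.5
    return mean, std

def scale(xs, stats):
    """Data preprocessing, part 2: standardize inputs with the measured stats."""
    mean, std = stats
    return [(x - mean) / std for x in xs]

def train(xs, ys, lr=0.1, epochs=500):
    """The algorithm: gradient descent on squared error. Its output is the model."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = 2.0 / n * sum((w * x + b - y) * x for x, y in zip(xs, ys))
        grad_b = 2.0 / n * sum((w * x + b - y) for x, y in zip(xs, ys))
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b  # the model: nothing but learned parameters

def evaluate(model, xs, ys):
    """Model evaluation: mean squared error on data not used for training."""
    w, b = model
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def predict(model, stats, raw_x):
    """Model deployment: apply the saved parameters to a new input."""
    w, b = model
    mean, std = stats
    return w * (raw_x - mean) / std + b

xs, ys = collect_data()
train_x, test_x = xs[:160], xs[160:]
train_y, test_y = ys[:160], ys[160:]
stats = fit_scaler(train_x)
model = train(scale(train_x, stats), train_y)
test_mse = evaluate(model, scale(test_x, stats), test_y)
prediction = predict(model, stats, 4.0)
print(f"model={model}, test_mse={test_mse:.4f}, prediction={prediction:.2f}")
```

Note that once `train` has returned, the training data is no longer needed to call `predict`; only the learned parameters and the scaling statistics are kept.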

The entire training process can be represented as the following diagram:

In this flowchart, the algorithm processes the data and generates a model, and it is also used to check whether the model meets the expected output. The algorithm is the core of the model; the model is the result of the algorithm.

2. Principles of Diffusion Algorithms

Diffusion algorithms are generative models used to generate images, text, audio, and other data. Their basic idea is to add noise to real data, making it gradually become random, then use a denoising network to reversely reconstruct original data.

Like other AIGC algorithms, diffusion algorithms also have training and sampling (generation) processes—both based on Diffusion Process and Denoising Networks. The diffusion process adds continuously increasing Gaussian noise to real data, making it gradually become random noise. A denoising network is a neural network that can, based on current noise levels, restore original or clearer data from noise-added data.
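The diffusion process described above can be sketched in a few lines. This is a minimal sketch that assumes the commonly used formulation in which a noised sample is a weighted mix of the original data and Gaussian noise; the linear noise schedule, the step count, and all names are assumptions for illustration, and the “image” is just a short list of pixel values.

```python
import math
import random

def make_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Fraction of the original signal (squared) that survives at each step."""
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta  # each step removes a little more signal
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Mix the clean data x0 with Gaussian noise at the given noise level."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    noisy = [signal_scale * x + noise_scale * e for x, e in zip(x0, noise)]
    return noisy, noise

ALPHA_BARS = make_alpha_bars()
rng = random.Random(0)
image = [1.0] * 16  # a flat toy "image" of 16 pixels

for step in (0, 250, 999):
    noisy, _ = add_noise(image, ALPHA_BARS[step], rng)
    print(step, round(ALPHA_BARS[step], 5), [round(v, 2) for v in noisy[:4]])
```

At the first step the pixels are almost unchanged; by the last step almost none of the original signal remains, which is the “random noise” end state the text describes.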

During training, it is necessary to add different levels of noise to real data, then use the denoising network to predict original data or next-step data, and use loss functions to measure differences between predictions and true values. The training objective is to enable the denoising network to restore original data as much as possible at any noise level.
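One common way to write this training objective is shown below. It assumes the noise-prediction formulation used by many denoising diffusion models; the article itself gives no formula, so all symbols are notation introduced for this sketch. Here $x_0$ is a training image, $\epsilon$ is Gaussian noise, $\bar\alpha_t \in (0,1)$ is the share of the original signal remaining at noise level $t$, and $\epsilon_\theta$ is the denoising network with parameters $\theta$.

$$
x_t = \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon, \qquad \epsilon \sim \mathcal{N}(0, I)
$$

$$
\mathcal{L}(\theta) = \mathbb{E}_{x_0,\,t,\,\epsilon}\left[\,\lVert \epsilon - \epsilon_\theta(x_t, t) \rVert^2\,\right]
$$

Read this way, the loss never asks the network to output a stored training image. It asks the network to guess which noise was added, averaged over all training images and all noise levels.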

Put simply, random noise is added to an image step by step until it becomes pure noise, and the noise image is then restored to an ordinary image by adjusting its pixels step by step. During training, the denoising network is compared against the noise that was actually added and repeatedly adjusts its predictions, learning how the pixels should be changed so that the result better matches the “style” of the original training material.

Once training is sufficient, the model, which contains this “prediction algorithm,” is complete. If we could “open up” the model, we would find that it contains a large number of “prediction paths” for turning a noise image into an ordinary image, and a program can follow these “paths” to generate all kinds of matching images.

When generating images, it is necessary to start from random noise, then use the denoising network to gradually reduce noise levels and predict the next reduction direction (through previous “paths”), generating clearer data. Finally, when the noise level reaches zero, we get generated data.
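The sampling loop can be sketched as follows. To keep the example runnable without training a neural network, the “denoising network” is replaced by a closed-form stand-in: the data is assumed to be one-dimensional and Gaussian (mean 3.0, standard deviation 0.5), for which the expected noise can be computed exactly. The schedule, the update rule (a standard ancestral sampling step), and all names are assumptions for illustration.

```python
import math
import random

T = 1000
BETAS = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]
ALPHAS = [1.0 - beta for beta in BETAS]
ALPHA_BARS = []
_running = 1.0
for _alpha in ALPHAS:
    _running *= _alpha
    ALPHA_BARS.append(_running)

DATA_MEAN, DATA_STD = 3.0, 0.5  # the toy "training distribution"

def toy_denoiser(x_t, t):
    """Stand-in for a trained network: exact expected noise for Gaussian data."""
    alpha_bar = ALPHA_BARS[t]
    variance_t = alpha_bar * DATA_STD ** 2 + (1.0 - alpha_bar)
    centered = x_t - math.sqrt(alpha_bar) * DATA_MEAN
    return math.sqrt(1.0 - alpha_bar) * centered / variance_t

def sample(rng):
    """Start from pure noise and remove the predicted noise step by step."""
    x = rng.gauss(0.0, 1.0)
    for t in reversed(range(T)):
        predicted_noise = toy_denoiser(x, t)
        x = (x - BETAS[t] / math.sqrt(1.0 - ALPHA_BARS[t]) * predicted_noise) / math.sqrt(ALPHAS[t])
        if t > 0:
            # every step except the last re-injects a little fresh randomness
            x += math.sqrt(BETAS[t]) * rng.gauss(0.0, 1.0)
    return x

rng = random.Random(0)
samples = [sample(rng) for _ in range(300)]
sample_mean = sum(samples) / len(samples)
sample_std = (sum((s - sample_mean) ** 2 for s in samples) / len(samples)) ** 0.5
print(f"mean={sample_mean:.2f}, std={sample_std:.2f}")
```

Each run starts from different noise and ends at a different value, yet the values as a group follow the distribution the denoiser encodes. No individual training example is looked up during sampling.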

Example of generation process—training process can be viewed as the reverse of generation

3. Using Keywords (Prompts) to Generate Specific AI Images

In the original diffusion model, even a trained model can only generate images that follow the patterns of the training set, with no particular target. If the training set is full of different types of images, the final result will “look a bit like everything” without being something a person can actually make sense of.

To ensure output results meet our expectations, AI programs need to introduce a classification and judgment system to determine the final generation direction of images.

Using Stable Diffusion V2 as an example.

Stable Diffusion V2 uses a text model called OpenCLIP as its text encoder. This model has about 350 million parameters (“parameters” are the numerical weights a model learns during training, not the training data itself). Each item of its training data consists of an image and a description of that image (in practice these items were obtained by crawling images and their descriptive texts from the internet, but this article does not discuss the legality of training set sources).

CLIP’s training can be simplified as a process of “judging whether a text description matches an image.” Images and texts are encoded by an image encoder and a text encoder respectively, image and text encodings are then paired up and compared for similarity over and over, and after extensive training the result is a model of “which description matches which image.”
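The matching step can be illustrated with a toy example. Real encoders produce vectors with hundreds of dimensions learned from data; the three-dimensional vectors below are hand-written stand-ins, and the names `image_embeddings`, `text_embeddings`, and `best_caption` are hypothetical. The sketch assumes that similarity is measured by cosine similarity between the image vector and each text vector.

```python
import math

def cosine_similarity(u, v):
    """Similarity of two vectors by angle: 1.0 means same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hand-written stand-ins for what an image encoder and a text encoder would output.
image_embeddings = {
    "dog_photo": [0.9, 0.1, 0.0],
    "apple_photo": [0.1, 0.9, 0.1],
}
text_embeddings = {
    "a dog on a lawn": [0.8, 0.2, 0.1],
    "a red apple": [0.0, 1.0, 0.2],
}

def best_caption(image_name):
    """Pick the description whose encoding is most similar to the image's."""
    image_vector = image_embeddings[image_name]
    return max(
        text_embeddings,
        key=lambda caption: cosine_similarity(image_vector, text_embeddings[caption]),
    )

for name in image_embeddings:
    print(name, "->", best_caption(name))
```

Training adjusts both encoders so that matching image and text pairs end up close together in this shared space and mismatched pairs end up far apart.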

After introducing text models, when training image models, it is necessary to label images—for example, labeling an image as “a dog, lawn, frisbee” or directly “a dog playing frisbee on a lawn” (this labeling process is often also done by AI). The model will then “learn” that this image has the three elements of “dog,” “lawn,” and “frisbee,” and through labels provided during training with other images, judge what specifically constitutes “dog,” “lawn,” and “frisbee.”

When the number of such images is extremely large, the AI “learns” what the “paths” (noise predictions) for generating these elements have in common. During generation, the requirements we type in (the “keywords”) are converted into encoded information that guides every denoising step toward content matching the keywords, until the noise image has been denoised (generated) into an image that matches them.
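One widely used way of steering each denoising step toward the prompt is classifier-free guidance, which the article does not name; it is introduced here as an assumption to make the idea concrete. The denoiser is run twice per step, once with the prompt encoding and once without, and the difference between the two noise predictions is amplified. The function name and the numbers are illustrative only.

```python
def guided_noise(noise_without_prompt, noise_with_prompt, guidance_scale):
    """Push the prediction from the unconditioned one toward the prompted one."""
    return [
        u + guidance_scale * (c - u)
        for u, c in zip(noise_without_prompt, noise_with_prompt)
    ]

# Toy noise predictions for a two-pixel "image".
unconditioned = [0.0, 1.0]
prompted = [1.0, 1.0]

print(guided_noise(unconditioned, prompted, 0.0))  # prompt ignored
print(guided_noise(unconditioned, prompted, 1.0))  # plain prompted prediction
print(guided_noise(unconditioned, prompted, 7.5))  # prompt strongly emphasized
```

A larger scale makes the output follow the keywords more closely at the cost of variety, which is why image tools typically expose it as a user setting.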

The following example shows Stable Diffusion’s generation process:

Generation prompt: Golden Retriever, grass, (8k, RAW photo, best quality, masterpiece:1.2), (realistic, photo-realistic:1.37), cinematic lighting, best quality, ultra high res, (photorealistic:1.4), ultra-detailed, extremely detailed, CG unity 8k wallpaper, best illustration, high resolution, film grain, Fujifilm XT3

This set of images shows how a picture is generated over 20 sampling steps. The prompt consists of Golden Retriever, grass, and several terms that specify a realistic style; the number after “Steps” in the figure indicates which iteration produced the image below it.

At the 1st and 3rd iterations there is only an orange blob and a ring of green, which suggests that the AI first settles on orange and green as the strongest commonalities of “Golden Retriever” and “grass” in the training set. By the 5th iteration, as denoising continues, the outline of a dog is visible; there are still some odd color patches on it and the grass is not yet clear, but the grass can already be told apart from the distant sky. By the 10th iteration a Golden Retriever on grass is clearly recognizable. From the 12th to the 20th iteration, the AI keeps refining and adjusting the picture so that the dog and the grass better match the characteristics of “Golden Retriever” and “grass” in the training set.

From these iterations we can clearly see how Stable Diffusion gradually turns a blurry mass of color into an image that matches the keywords. The final result still has a slightly “greasy” look, as it is commonly called, and is visibly a little different from a real photo, but it is precisely this “greasy” look that shows the AI is not stitching pictures together from a database and instead has its own distinct way of generating images.

4. Judging from the Principles of Diffusion Models Whether Generated Results Are Infringing

1. Both Diffusion Model Training and Generation Reflect “Commonalities”

As the training and generation principles above show, a diffusion model does not simply imitate the lines, composition, color, or other specific content of a particular image in the dataset, and it certainly does not “fetch images from a database and stitch them together.” Instead, it analyzes what a given element has in common across many images and, starting from a noise image, denoises and adjusts step by step to generate a result that matches those commonalities.

The AI does not know what “apple” means, nor does it pick an “apple” from a database and display it after slight modification. It only knows how pixels should be distributed across a noise image so that the result better matches the content labeled “apple” in the dataset.

From a stack of apple images, it learns the commonalities of “apples” such as shape and color; from a stack of oil paintings, it learns the “oil painting” commonalities of texture and brushstrokes; from a collection of a certain painter’s works, it learns that painter’s color choices and compositional commonalities.

After adding ink painting style training models, it can even generate images in Chinese ink painting style

2. Under Normal Circumstances, Works Created Based on “Commonalities” Should Not Be Viewed as Infringement of Training Set Works’ Copyright

Most people learn to paint by starting with copying. After mastering the commonalities of “beauty” and “nature,” such as light and shadow, line, composition, structure, and proportion, they practice and adjust continuously and gradually create works in their own style. The training and generation of diffusion-model AI programs such as Stable Diffusion replicate exactly this process. The difference is that humans learn from natural laws observed with their eyes and from other people’s works, while the AI learns from the images supplied by its trainers.

The Copyright Law of the People’s Republic of China provides that copyright holders enjoy rights in their works including the right of modification, the right to protect the integrity of the work, the right of reproduction, and the right of distribution. Looking back at the training process of a diffusion model, however, the works in the dataset are not modified or damaged in any way (adding noise during learning clearly does not count as damage in the legal sense), and the “commonality”-based generation process does not reproduce, distribute, or exhibit the works in the original dataset. In addition, current law does not grant anyone exclusive rights over a “painting style.” So even if an author’s collected works are used to train on that “style,” the final work merely incorporates the artistic commonalities of the style and is not a copy of any particular work.

3. However, “Overfitting” May Cause Excessive Similarity to Training Works—Thus Should Be Judged Based on Specific Model Situations

“Underfitting” and “overfitting” are both faulty training outcomes in deep learning and machine learning models. The former arises when there is too much data and too little training, so the model cannot generate results that capture the “commonalities”; the latter arises when the dataset is too small and too narrow, so the generated results are overly similar to the training set.

Just as a person cannot imagine what they have never seen, suppose a training set contains only 5 works with different labels. After many rounds of training the resulting model is “overfitted”: its understanding of any given “element” comes from those 5 works alone, so a generated work may well be a reproduction of one of them.

In this situation, even though generation still proceeds by denoising toward “commonalities,” those “commonalities” derive entirely from a single work, so the generated content will be overly similar or even identical to it. Invoking the principles of the model and algorithm to argue that the generated work does not infringe the copyright of the training set work then clearly lacks persuasiveness.
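A reviewer who has access to the training set could test for this kind of reproduction directly. The sketch below is one possible check, not an established legal or technical standard: it measures how close a generated output is to its nearest training item and flags it when the distance falls under a threshold. The tiny four-value “images,” the Euclidean distance, and the threshold of 0.05 are all assumptions chosen for illustration.

```python
import math

def euclidean_distance(a, b):
    """Straight-line distance between two equally sized pixel lists."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def nearest_training_match(generated, training_set):
    """Return (index, distance) of the training item closest to the output."""
    distances = [euclidean_distance(generated, item) for item in training_set]
    index = min(range(len(distances)), key=distances.__getitem__)
    return index, distances[index]

def looks_memorized(generated, training_set, threshold=0.05):
    """Flag outputs that nearly coincide with a single training item."""
    _, distance = nearest_training_match(generated, training_set)
    return distance < threshold

# A five-item training set, as in the hypothetical above.
training_set = [
    [0.0, 0.0, 0.0, 0.0],
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]
near_copy = [0.0, 0.99, 0.01, 0.0]  # almost identical to item 2
blend = [0.5, 0.5, 0.5, 0.5]        # resembles no single item

print(nearest_training_match(near_copy, training_set), looks_memorized(near_copy, training_set))
print(nearest_training_match(blend, training_set), looks_memorized(blend, training_set))
```

In practice a comparison on raw pixels would be too crude, and a perceptual or embedding-based distance would likely be needed, but the structure of the check stays the same.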

5. Algorithm Models Used in AI Works Should Be the Core of Infringement Review

The analysis above shows that the algorithm models behind different AI-generated works operate on different principles. For the currently popular diffusion models in particular, works generated under normal circumstances usually do not infringe the rights in any specific work in the training set. However, some situations need separate discussion: “overfitting,” the use of LoRA models (Low-Rank Adaptation of Large Language Models) trained on specific content, and “image-to-image” generation with a low redraw strength (denoising strength), each of which can make the output excessively similar to original training set images or to specific content.

1. Treating Works as Infringing Merely Because They Are AI-Generated, While Ignoring How the Algorithm Model Actually Works, Easily Leads to Over-Protection

AI-generated works and manually created works differ in their creation processes and principles, but they also have much in common. Most algorithm models behind AI-generated works operate by analyzing large amounts of information and data to learn the “experience” or “commonality” of a field and then creating new works on that basis; they do not simply copy, transplant, or paste a specific work from the training set. If this technical principle is ignored and works are deemed infringing simply because they are AI-generated, the way AI creates is fundamentally misunderstood, and the development and application of AI technology in cultural and creative fields is improperly restricted.

Take this article’s example. Artworks generated by a diffusion algorithm are created by a machine that learns “commonality” and “patterns” from a large number of artworks of the same type and then, according to the user’s request, combines the “commonality” and “patterns” of different content in the most realistic and logical way to produce a new work. This process does not directly use any specific work. In essence it is no different from a person learning “oil painting” techniques and then creating a new oil painting, or learning “Chinese ink painting” techniques and then creating a new ink painting.

If AI-generated works are simply defined as infringing the training set works, this amounts to saying that all the “commonality” and “patterns” of a field belong to certain people or groups. That undoubtedly exceeds the scope of protection under the Copyright Law and constitutes obvious over-protection.

In the current era of widespread AI software, anyone can generate the content they want without years of study to master a professional skill. This helps spark creativity broadly and returns the core of “creation” to the act of creating, so that people are not prevented from creating by insufficient skill. But if certain “commonality” and “patterns” are deemed the preserve of groups that already have the ability to “create,” and all AI software that learns from them is deemed infringing, social creativity is hindered and the development of AI technology is cut off outright. After all, AI, like humans, cannot imagine (create) content it has never seen (been trained on).

That said, beyond diffusion-like algorithms, it cannot be ruled out that some algorithms genuinely stitch training set works together. Therefore, to avoid over-protection, the judgment of whether an AI work infringes should focus on how the algorithm model actually works, rather than resting simply on the fact that the work is AI-generated.

2. Treating AI Works as Non-Infringing Across the Board, While Ignoring How the Specific Algorithm Model Is Used, May Lead to Insufficient Protection

The algorithm models behind different AI-generated works differ in how they use data and information. Some models generate new works directly on the basis of the training dataset. If the training data includes certain original works and the algorithm model cannot effectively avoid overusing them, the generated AI works are likely to infringe. If the specific way an algorithm model is used is ignored and AI-generated works are simply deemed non-infringing, the rights of the original works’ rights holders may not be reasonably protected.

For example, suppose the training corpus of a text generation model contains only a small number of novels, or only the novels of a single author. If the algorithm cannot avoid directly transplanting or heavily borrowing the characters, plot, and language of those novels, the AI work it generates is likely to infringe the exclusive rights of the original novel’s rights holder. Deeming the work non-infringing simply because it is an AI work ignores the fact that the algorithm model over-relies on and overuses the original works, and it leaves the rights in those works insufficiently protected.

Similarly, Stable Diffusion, although based on a diffusion model, also supports loading LoRA models that bias generated works toward particular results. Many users train their LoRA models on specific real people or fictional characters, so the generated AI works contain those people or characters, and such AI paintings may infringe others’ portrait rights or the copyright in those characters. If the possibility of infringement is simply denied because the works are based on a diffusion algorithm, this ignores the specific way the algorithm model uses data as well as the user’s intent to infringe, and it fails to give rights holders the protection they are due.
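Why a LoRA model deserves separate scrutiny becomes clearer from its basic mechanism, which in the published LoRA formulation is a small low-rank correction added to a frozen base weight matrix: W' = W + (alpha / r) · B · A. The sketch below shows only that arithmetic on tiny hand-written matrices. The helper names and all numeric values are assumptions for illustration, and real tools apply such updates to many layers of a much larger network.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    inner, cols = len(b), len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for row in a
    ]

def apply_lora(base_weight, lora_b, lora_a, alpha):
    """Return base_weight + (alpha / rank) * (lora_b @ lora_a).

    base_weight is d x k, lora_b is d x r, lora_a is r x k, where the rank r
    is much smaller than d and k in practice. The base weight is not modified.
    """
    rank = len(lora_a)
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(base_weight, delta)
    ]

# Hypothetical 3 x 3 base weight (learned from a broad dataset).
base_weight = [[1.0, 0.0, 0.0],
               [0.0, 1.0, 0.0],
               [0.0, 0.0, 1.0]]

# Hypothetical rank-1 adapter (learned from a narrow, specific dataset).
lora_b = [[1.0], [0.0], [0.0]]   # 3 x 1
lora_a = [[0.0, 0.0, 2.0]]       # 1 x 3

merged = apply_lora(base_weight, lora_b, lora_a, alpha=1.0)
adapter_params = len(lora_b) * len(lora_b[0]) + len(lora_a) * len(lora_a[0])
base_params = len(base_weight) * len(base_weight[0])
print(merged)
print(adapter_params, base_params)
```

Because the adapter is a separate, small set of weights trained on its own dataset, a review that takes the algorithm model as its core can examine what the adapter was trained on independently of the base model's training set.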

To ensure that rights holders receive the protection they are due, the judgment of whether an AI work infringes should genuinely consider how the algorithm model actually trains on and uses data, and should pay due attention to situations that may lead to insufficient protection.

3. Taking Algorithm Models as the Core and Confirming the Legal Status of AI Technology Helps Foster a Healthy Interaction Between AI Technology and Law

Algorithm models are the technical foundation of AI-generated works, yet their legal status is currently unclear. At present, only the “Management Provisions on Internet Information Service Algorithm Recommendation” regulates the use of “algorithm recommendation,” while the “Administrative Measures for Generative Artificial Intelligence Services (Draft for Comment)” now being formulated has been widely discussed in the industry because it diverges sharply from how generative artificial intelligence actually works. If algorithm models were governed by legal rules that fit reality, covering for example whether the use of training sets constitutes fair use, who owns the copyright in generated works, and the specific rights and obligations of algorithm creators and users, this would help AI model developers and users understand the relevant legal risks, encourage more developers and investors to commit to innovative algorithm model R&D, promote the development and application of AI technology in broader fields, and further highlight its role across industries.

In addition, current online discussion of artificial intelligence suggests that the legal field does not yet understand emerging technologies such as AI in sufficient depth. As a result, some legal analyses and viewpoints reach conclusions without fully understanding the principles and characteristics of the new technology, and those conclusions may be overly subjective and ignore objective factors. Compared with earlier technologies, emerging technologies such as AI, once combined with algorithm models and related components, are often more complex and harder to understand, which poses considerable challenges for legal analysis. Take AI-created works as an example: if the working methods and principles of different algorithm models are not understood, it is difficult to reach appropriate analyses and conclusions on infringement and protection. Moreover, if the legal field cannot keep pace with new technology and fully understand its internal principles and characteristics, it will struggle to grasp the essentials of that technology, and deviations will easily arise when formulating regulations, making judicial decisions, or conducting risk analyses. This may produce a situation in which law runs too far ahead of technological development and hinders the application and promotion of new technology.

If the legal field studies and researches the principles of algorithm models sufficiently and establishes methods of judging infringement that take algorithm models as their core, it will be able to make more rigorous judgments about artificial intelligence technology, formulate laws and regulations that better fit reality, reduce risks for AI developers and users, encourage the design and development of more innovative algorithm models, and promote the application of AI technology in broader fields, especially its further development in the cultural and creative industries. This benefits the vigorous development of the AI industry itself, and it also enriches human spiritual life and promotes social progress.

[1] “Difference Between Algorithm and Model in Machine Learning,” https://machinelearningmastery.com/difference-between-algorithm-and-model-in-machine-learning/